Research

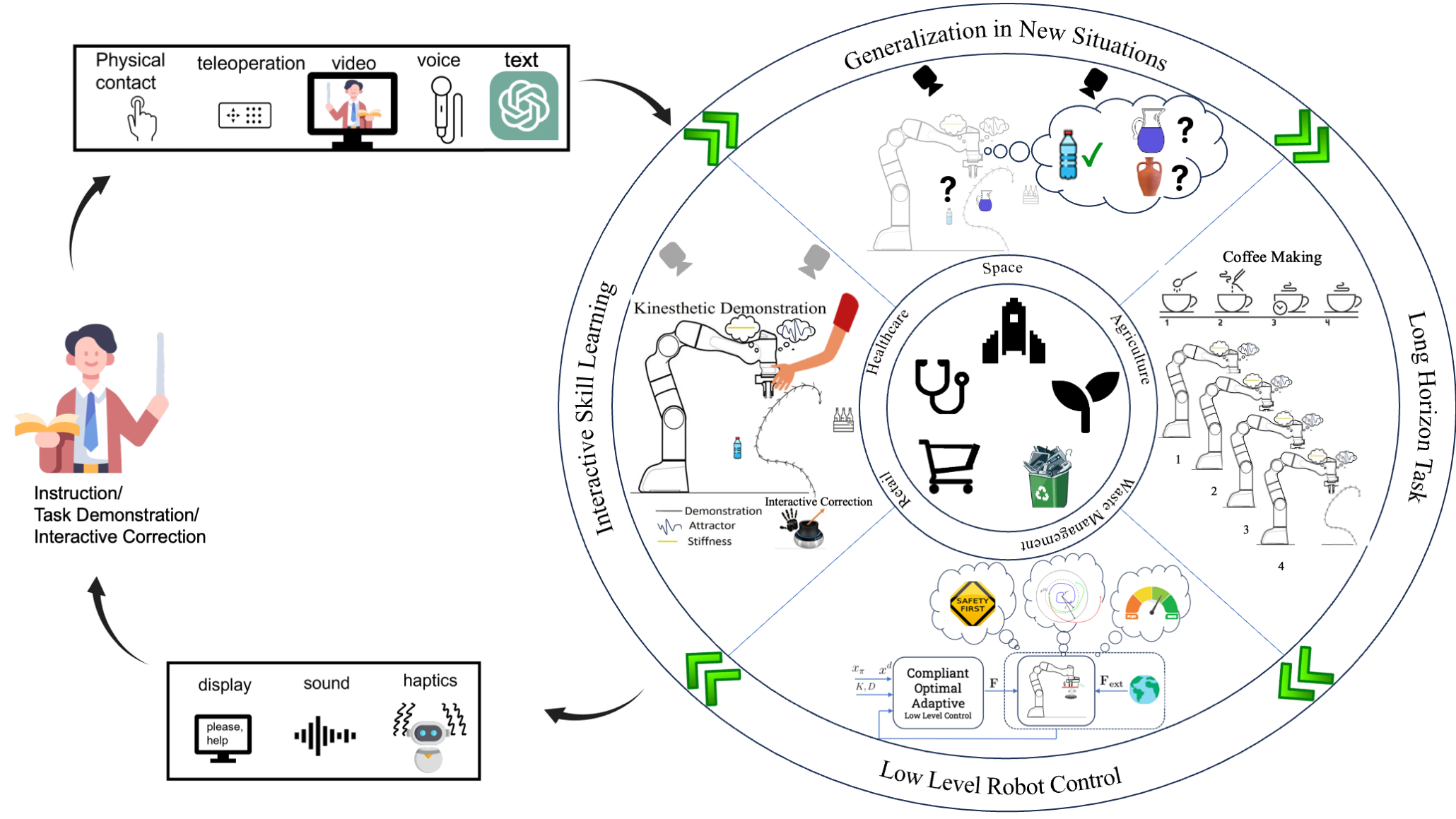

Our mission is to develop theoretical foundations and practical algorithms for human-interactive robotics. Our group focuses on enabling robots to learn from and collaborate with humans in real-world environments. We leverage tools from machine learning, control theory, and human-robot interaction to build intelligent, adaptive, and safe robotic systems.

Interactive Reinforcement Learning

We develop methods for robots to learn complex manipulation tasks through hierarchical reinforcement learning and imitation learning. Our work on Impedance Primitive-Augmented Hierarchical RL (ICRA 2025) augments high-level RL policies with impedance control primitives to solve long-horizon sequential tasks, enabling compliant and adaptive skill acquisition. We also investigate robust imitation learning. This includes methods that exploit mixed-quality demonstrations (Beyond the Teacher, ICRA 2026) and progress-aligned data curation (PACER, CoRL Workshop 2025) to improve generalization from imperfect human teachers, as well as stochastic encodings (RISE, IROS Workshop 2025) for robust policy learning.
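As a rough illustration of what an impedance control primitive computes, the Python sketch below evaluates the textbook Cartesian spring-damper law F = K (x_des - x) + D (x_des_dot - x_dot). The function name, the gain values, and the idea that a high-level policy picks the target and stiffness are illustrative assumptions, not the implementation from the ICRA 2025 paper.

```python
import numpy as np

def impedance_force(x, x_dot, x_des, x_des_dot, stiffness, damping):
    """Textbook Cartesian impedance law: F = K (x_des - x) + D (x_des_dot - x_dot)."""
    K = np.diag(stiffness)  # per-axis stiffness in N/m
    D = np.diag(damping)    # per-axis damping in N*s/m
    position_error = np.asarray(x_des, dtype=float) - np.asarray(x, dtype=float)
    velocity_error = np.asarray(x_des_dot, dtype=float) - np.asarray(x_dot, dtype=float)
    return K @ position_error + D @ velocity_error

# Hypothetical primitive parameters: in a hierarchical setup, a high-level
# policy could select the target pose and stiffness (assumption for illustration).
stiffness = np.array([400.0, 400.0, 100.0])  # stiff in x and y, compliant in z
damping = 2.0 * np.sqrt(stiffness)           # critical damping for unit mass (assumption)

force = impedance_force(
    x=[0.0, 0.0, 0.0],
    x_dot=[0.0, 0.0, 0.0],
    x_des=[0.01, 0.0, -0.02],
    x_des_dot=[0.0, 0.0, 0.0],
    stiffness=stiffness,
    damping=damping,
)
print(force)
```

Lowering the stiffness along one axis, as done for z here, makes the end effector yield more readily to contact forces in that direction, which is the kind of compliance the paragraph above refers to.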

Foundation Models for Robotics

We explore how large-scale pre-trained vision-language models can enable natural, flexible human-robot interaction. Our work on OVITA (Open-Vocabulary Interpretable Trajectory Adaptations, RA-L 2025) uses vision-language models to adapt robot trajectories in real time based on open-vocabulary human language instructions, providing interpretable modifications without retraining. We also develop DiffusionPack (NeurIPS Workshop 2025), a diffusion-based approach to complex bin-packing manipulation tasks that incorporates custom human preferences and bridges language, world models, and physical robot execution.

Safe and Compliant Human-Robot Interaction

Safety and compliance are essential when robots work alongside humans. Our work on SafeDMPs (ICRA 2026) integrates Signal Temporal Logic (STL)-based formal safety specifications directly into Dynamic Movement Primitives, enabling adaptive robot motions that are provably safe during physical human-robot contact. We also develop certified reinforcement learning for variable impedance control (ICRA 2026), which combines Lyapunov-based stability certificates with RL to achieve optimal, safe impedance modulation during interaction. Our stability-aware PI² framework (ICRA Workshop 2025) further extends these ideas for safe interaction under uncertainty.
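For readers unfamiliar with Dynamic Movement Primitives, the Python sketch below rolls out a one-dimensional discrete DMP in its standard textbook form: a critically damped spring-damper pulled toward the goal, plus a learned forcing term that fades with a decaying phase variable. It does not include the STL-based safety layer of SafeDMPs; the function name, gains, and basis-function widths are illustrative assumptions.

```python
import numpy as np

def rollout_dmp(y0, goal, weights, tau=1.0, dt=0.001, duration=2.0,
                alpha_z=25.0, beta_z=6.25, alpha_x=3.0):
    """Euler rollout of a one-dimensional discrete DMP (textbook form)."""
    weights = np.asarray(weights, dtype=float)
    n_basis = len(weights)
    # Basis centres spaced along the decaying phase variable x in (0, 1]
    centres = np.exp(-alpha_x * np.linspace(0.0, 1.0, n_basis))
    widths = np.full(n_basis, float(n_basis) ** 1.5)  # heuristic widths (assumption)
    y, z, x = float(y0), 0.0, 1.0
    trajectory = [y]
    for _ in range(int(round(duration / dt))):
        psi = np.exp(-widths * (x - centres) ** 2)
        # The forcing term vanishes as x -> 0, so the goal attractor dominates late in the motion
        forcing = (psi @ weights) / (psi.sum() + 1e-10) * x * (goal - y0)
        z_dot = (alpha_z * (beta_z * (goal - y) - z) + forcing) / tau
        y_dot = z / tau
        x_dot = -alpha_x * x / tau
        z, y, x = z + z_dot * dt, y + y_dot * dt, x + x_dot * dt
        trajectory.append(y)
    return np.array(trajectory)

# With zero weights the forcing term is zero and the DMP reduces to a
# critically damped reach from y0 to the goal.
trajectory = rollout_dmp(y0=0.0, goal=1.0, weights=np.zeros(10))
print(trajectory[-1])
```

In practice the weights would be fitted to a demonstration, and changing `goal` at run time adapts the motion without refitting. A safety-aware variant would additionally have to constrain or reshape this rollout, which is beyond what this sketch shows.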

Optimization and Optimal Control

We develop optimization-based and data-driven approaches for robot motion planning and control. Our Adaptive Critic framework (IEEE T-CST 2025) learns optimal controllers for uncertain robot manipulators using neural network-based value function approximation, achieving data-efficient online adaptation without explicit system identification. Our work on generalizable motion policies through keypoint parameterization and transportation maps (IEEE T-RO 2025) enables one-shot generalization of manipulation skills to novel object configurations. We also develop ST² (Sequentially Teaching Sequential Tasks, RA-M 2026), a framework for teaching robots long-horizon manipulation skills through sequential human demonstrations.

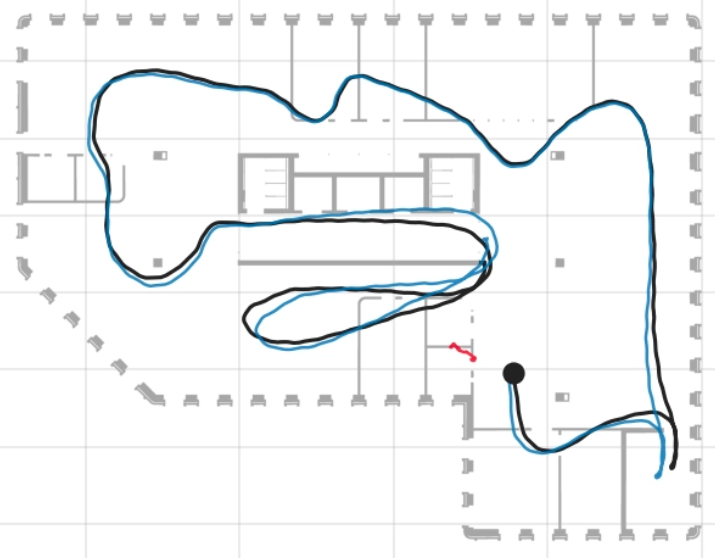

3D Reconstruction, SLAM, and World Models

We develop methods for robots to build accurate 3D models of their environment through active perception. Our work spans simultaneous localization and mapping (SLAM), real-time scene reconstruction, world models for spatial understanding, and object-level mapping. We integrate these capabilities with manipulation planning so that robots can operate effectively in complex, unstructured spaces.